Hello {{first_name | AI enthusiast}},

Harvard’s Ash Center questions whether AI can fake moral “crocodile tears,” while OpenAI’s healthcare blueprint pushes HHS and FDA to modernize clinical AI oversight. Meanwhile, Roadrunner lands $27M and Brussels activates the EU AI Act’s enforcement engine.

Scroll down to catch the signals that matter.

Someone just spent $236,000,000 on a painting. Here’s why it matters for your wallet.

Late last year, a Klimt sold for the highest price ever paid for modern art at auction.

An outlier, sure, but not a fluke. U.S. auction sales grew 23.1% in 2025, and the $1-5 million segment grew 40.8% year over year.

Meanwhile, Apollo’s chief economist Torsten Slok said to expect ‘zero in return in the S&P 500 over the coming decade.’

Each environment is unique, but after the dot-com crash, postwar and contemporary art grew about 24% annually for a decade. After 2008, it grew about 11% annually for 12 years.

It’s also had near-zero correlation with the S&P 500 since ’95.*

Now, Masterworks lets you invest in shares of artworks featuring legends like Banksy, Basquiat, and Picasso.

$1.3 billion invested across over 500 artworks.

28 sales to date.

Net annualized returns such as 14.6%, 17.6%, and 17.8% on sold works held 12+ months.

Shares can sell quickly, but my subscribers can skip the waitlist:

*Investing involves risk. Past performance is not indicative of future returns. See important Reg A disclosures at masterworks.com/cd.

What's in Today?

- 🏥 OpenAI pushes HHS and FDA toward modern clinical AI rules

- 🤝 General Tensor bets big on decentralized AI trading

- 💸 Roadrunner secures $27M to scale AI‑native software

- 🧠 Harvard probes the limits of AI “moral intelligence”

- 🏗️ Policymakers float pauses on AI data center construction

- 🇪🇺 EU activates its AI Act enforcement engine

- ⚖️ Court blocks sanctions on U.N. expert amid political controversy

- 🏛️ White House stance edges toward tougher AI oversight

- 📚 Supreme Court leaves AI‑copyright uncertainty in place

- 👨‍💻 Chinese court penalizes replacing worker with AI

🏥 OpenAI pushes HHS and FDA toward modern clinical AI rules

OpenAI’s new healthcare policy blueprint urges regulators to modernize oversight of AI tools. The Advisory Board breakdown highlights calls for patient-directed data portability, clinician-supervised AI deployments, and clearer HHS/FDA pathways for higher-risk software used in diagnosis, treatment, and administrative workflows, with the goal of reducing burnout and expanding access.

Expect accelerating pressure on U.S. health regulators to clarify where clinical AI is allowed, restricted, and continuously monitored.

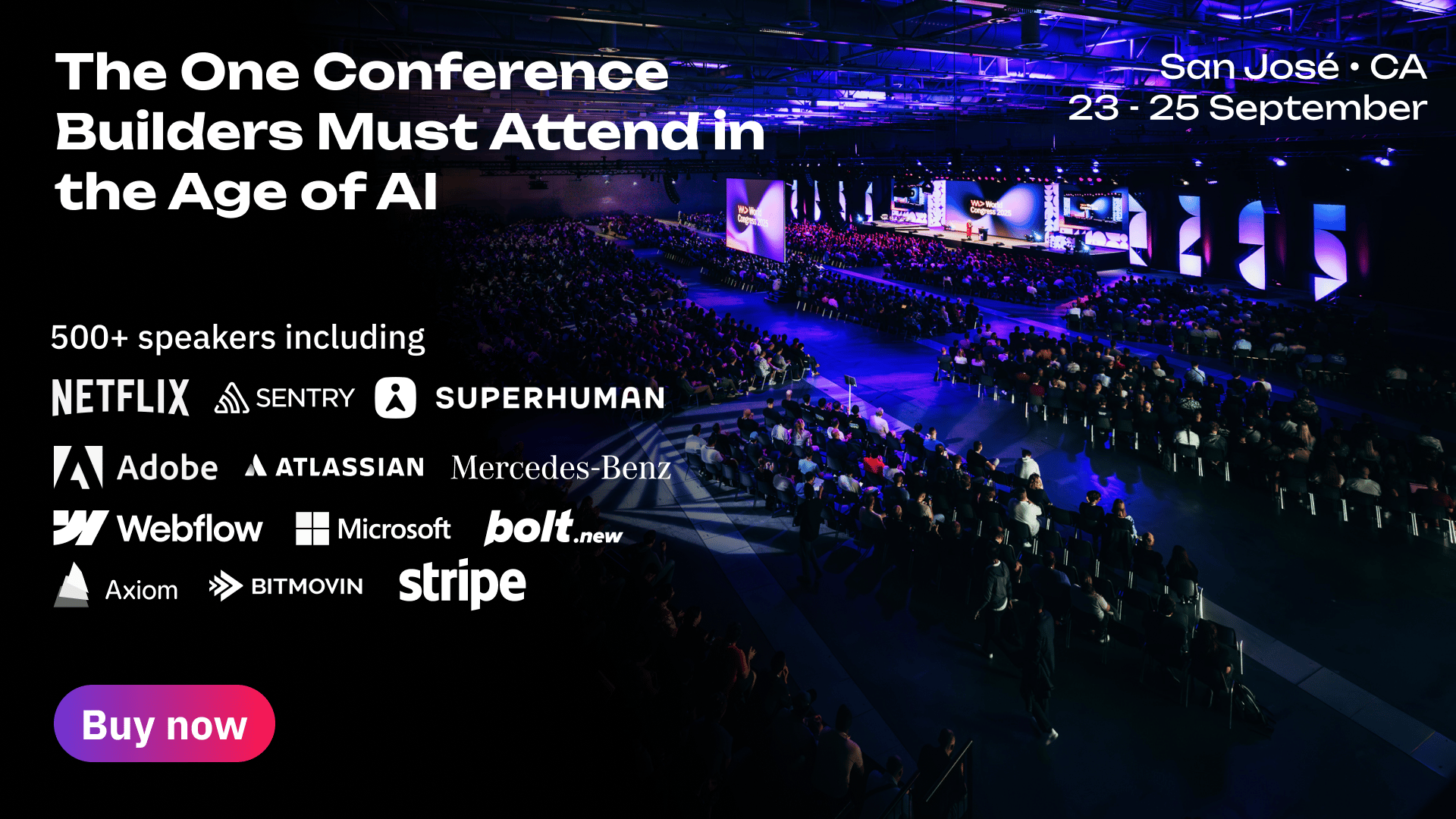

The World's Biggest Dev Event Hits Silicon Valley

From AI and cloud to DevOps and security — WeAreDevelopers World Congress brings the entire modern stack to San Jose. 500+ speakers. 10,000+ developers. One epic September. Use code GITPUSH26 for 10% off.

🤝 General Tensor bets big on decentralized AI trading

General Tensor is acquiring Backprop Finance, a major Bittensor-based decentralized AI trading platform. The deal consolidates trading, liquidity, and infrastructure for tokenized AI workloads, signaling growing institutional interest in crypto-native compute markets and automated strategy execution tied directly to AI model performance and network incentives.

Watch for decentralized AI marketplaces to mature from experimental networks into structured financial infrastructure.

💸 Roadrunner secures $27M to scale AI‑native software

Law firm Goodwin revealed it advised AI-native software company Roadrunner on $27 million in combined seed and Series A financings. The round, involving multiple venture investors, will help Roadrunner expand product development, go-to-market capacity, and enterprise functionality, positioning its AI-driven platform to compete aggressively in data-intensive software categories.

Funding signals sustained investor confidence in vertical AI apps, not just foundational model providers.

🧠 Harvard probes the limits of AI “moral intelligence”

In a Q&A, the Harvard Ash Center interviews researcher Sarah Hubbard about whether models’ apparent moral reasoning reflects genuine understanding or pattern mimicry. The conversation explores failures on edge cases, cultural bias, and how persuasive language can mask ethical blind spots, raising red flags about delegating normative decisions to AI.

Expect governance frameworks to treat AI “moral judgments” as high-risk, requiring explicit human ownership of ethical choices.

Stop re-prompting. Say it right the first time.

Voice-first prompts preserve the nuance you cut when typing. Speak once, paste into any AI tool, get results that don't need a follow-up. 89% of messages sent with zero edits.

🏗️ Policymakers float pauses on AI data center construction

Troutman Pepper outlines how federal, state, and local officials are exploring moratoriums and stricter permitting for AI-focused data centers. Their analysis of emerging proposals highlights concerns over grid capacity, water use, land use, and tax policy, urging operators to engage early with policymakers and communities.

Energy and environmental scrutiny could become a primary bottleneck for scaling AI infrastructure in key regions.

🇪🇺 EU activates its AI Act enforcement engine

The European Commission officially launched the European Artificial Intelligence Board, a new body coordinating national authorities as the EU AI Act moves into enforcement. The Board will issue guidance, harmonize risk categorization, and help ensure consistent supervision across member states, tightening compliance expectations for providers serving European markets.

Companies building or deploying AI in Europe must now operationalize AI Act compliance as a core regulatory obligation, not a future concern.

⚖️ Court blocks sanctions on U.N. expert amid political controversy

A U.S. judge temporarily blocked sanctions targeting U.N. special rapporteur Francesca Albanese, as reported by Democracy Now!. The case centers on whether government actions can restrict an international official’s work and financial access, highlighting mounting legal friction around foreign policy tools and civil liberties.

Expect renewed debate over how far sanctions regimes can go without chilling independent international advocacy and research.

🏛️ White House stance edges toward tougher AI oversight

WJCT Public Media details how shifting White House rhetoric could translate into stronger federal oversight of AI technologies. The piece on evolving regulatory positions describes growing concern over safety, jobs, and national competitiveness, suggesting openness to targeted guardrails rather than purely market-driven approaches.

Policy momentum in Washington is tilting toward more assertive, sector-specific AI rulemaking and enforcement.

📚 Supreme Court leaves AI‑copyright uncertainty in place

The U.S. Supreme Court declined to review a pivotal dispute over copyright protection for AI-generated works. According to a summary from IIPLA, the refusal leaves lower-court rulings intact, reinforcing limits on registering works without meaningful human authorship.

Creative industries must assume AI-only outputs face weak or no copyright protection under current U.S. law.

👨‍💻 Chinese court penalizes replacing worker with AI

A Hangzhou court ordered a fintech firm to compensate an employee after firing him and substituting AI for his role. As reported by Sixth Tone, the court found the dismissal illegal, signaling early judicial pushback against abrupt algorithmic workforce displacement.

Employers experimenting with AI-driven job restructuring should expect rising legal and reputational risk around unfair terminations.

Questions, or want a deeper dive on any of these signals? Hit reply with the trend you care about most, and we’ll unpack it in the next edition.

Share your unique referral link with a colleague shaping AI strategy.